About Us

Athletes.

Engineers.

Innovators.

Lorenzo Galli is a sports technology developer and the creator of Kinepose. With a background in computer science and a career in athletics, Lorenzo has always been fascinated by the intersection of sports and technology.

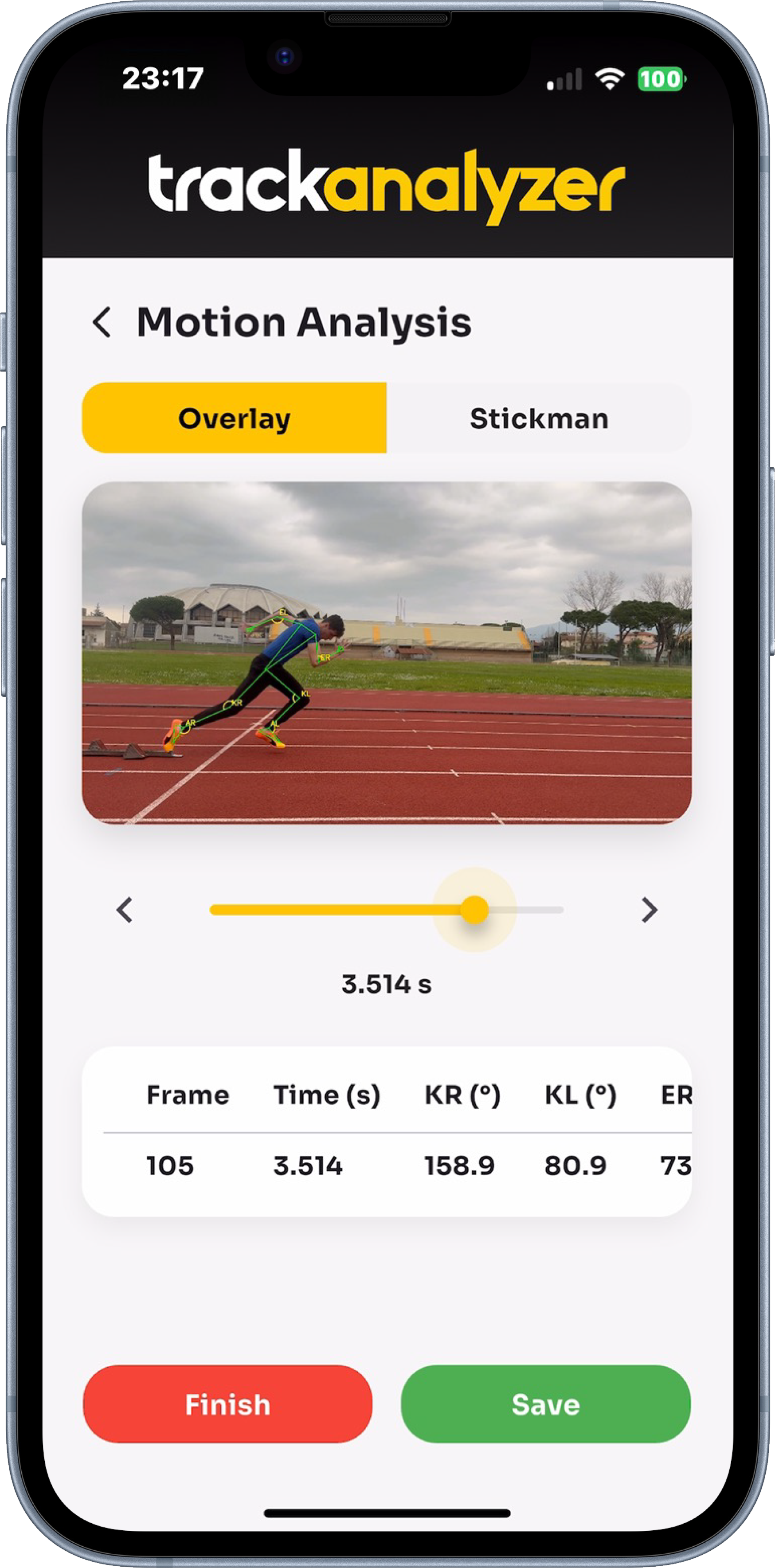

Kinepose grew out of Lorenzo's idea to address a simple frustration: high-level biomechanical analysis was locked behind expensive lab equipment and specialist access.

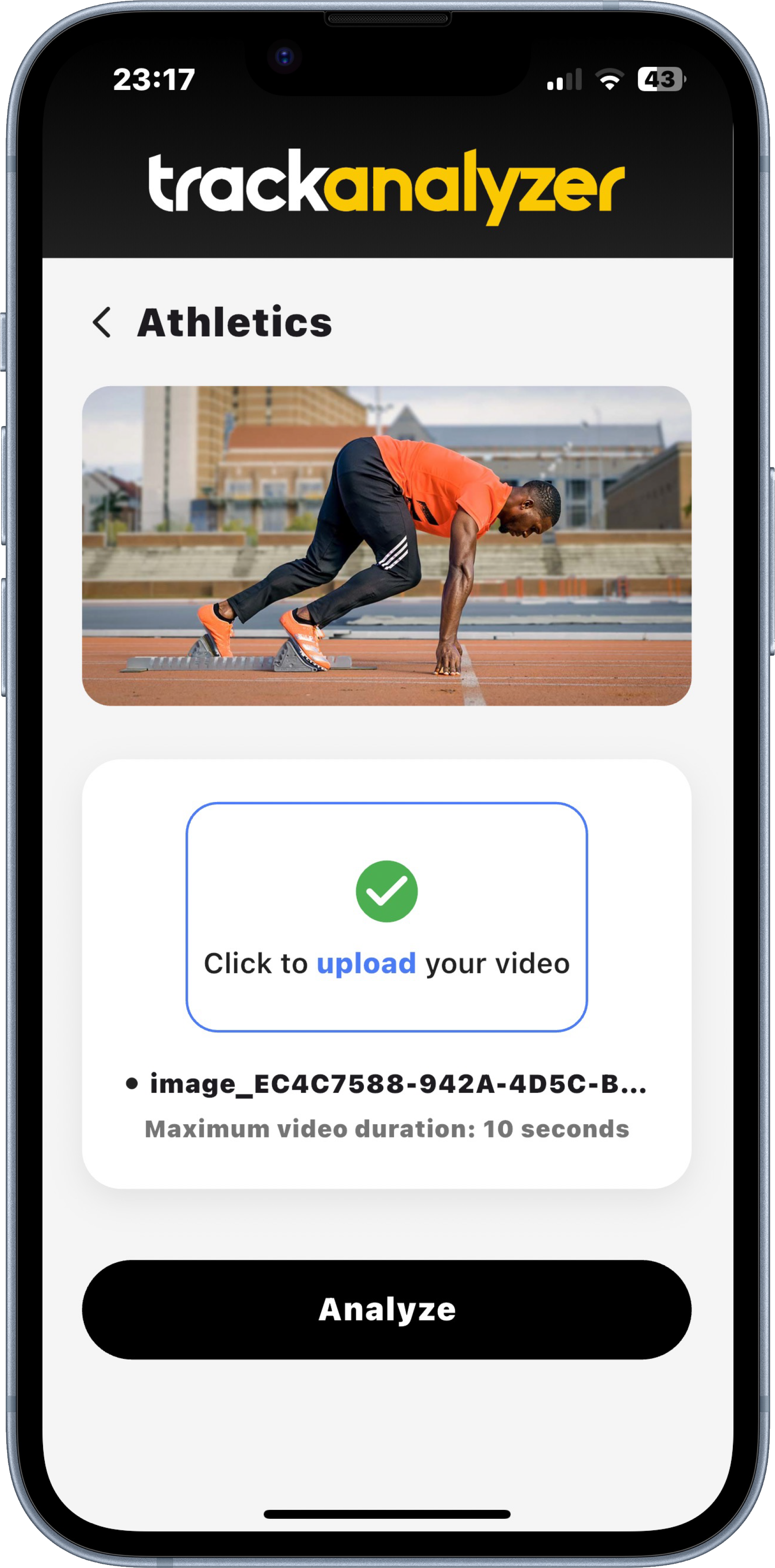

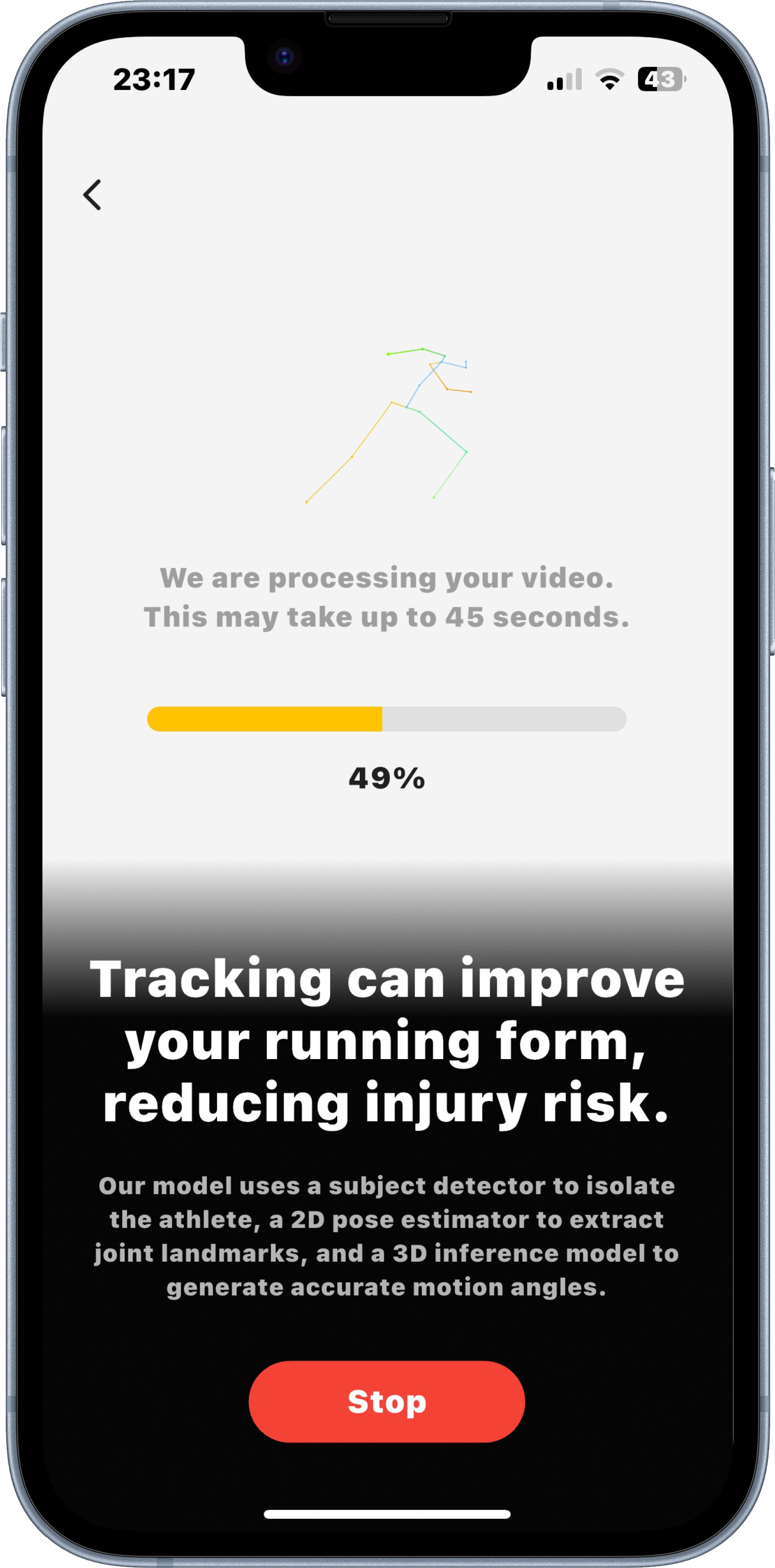

Kinepose started as "Track Analyzer", a project led by Lorenzo Galli and developed during Samsung Innovation Campus 2025 in cooperation with Fabio Gulmini, Martina Capobianco and Alessio Musto. Building on that foundation, Kinepose advances the same vision through computer vision and AI, with a particular focus on athletics. The main goal is to give coaches and athletes high-quality data without the need for a lab environment. As of today, Kinepose remains in beta, and Lorenzo Galli offers consultancy services for teams and athletes interested in using the technology for performance analysis and training optimisation.

1st Samsung Innovation Campus 2025

AI-Powered Motion Pipeline

0 Extra hardware needed